After two decades of work, the IHE PIX/PDQ committee deliberately left patient merge semantics implementation-defined. The standard says merges happen inside a Patient Identity Domain, but stops short of specifying what a merge does — leaving "responsibility for quality and management of patient demographics information within each Patient Identity Domain". That is not a hole in the spec — it is an admission. There is no single correct merge.

IHE is not alone. A recent industry review calls merge operations "scattered SOPs rather than a unified, findable framework" — no standards body, no regulator has closed the gap. ONC and CMS mandate audit trails and keep pushing matching rates higher; neither prescribes the merge procedure itself. It is the same admission IHE made, one layer out.

Our previous post ended by promising $unmerge. That promise still stands, but one question was left hanging: why should merge and unmerge work this way in the first place? This post closes that gap. It is about why a server-driven merge cannot work, no matter how clever the server.

What the FHIR spec gives you today

FHIR R5 defines Patient/$merge at maturity level 0. Inputs: source-patient, target-patient, optional result-patient. The server is expected to deactivate the source, copy identifiers, and update references. How it does each of these is left to the implementation. The operation has not moved past maturity 0 since R5 shipped.
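To make the input side concrete, here is a minimal sketch of the request body a client might send. The parameter names come from the list above; the helper name `build_merge_parameters` and the IDs `pat-a` and `pat-b` are hypothetical, and passing each patient as a `valueReference` is an assumption about how a given server accepts them.

```python
import json


def build_merge_parameters(source_id: str, target_id: str) -> dict:
    """Build a FHIR Parameters body for Patient/$merge (hypothetical helper)."""
    if source_id == target_id:
        raise ValueError("source and target must be different patients")
    return {
        "resourceType": "Parameters",
        "parameter": [
            # Assumption: both patients are passed as literal references.
            {"name": "source-patient",
             "valueReference": {"reference": f"Patient/{source_id}"}},
            {"name": "target-patient",
             "valueReference": {"reference": f"Patient/{target_id}"}},
        ],
    }


params = build_merge_parameters("pat-a", "pat-b")
print(json.dumps(params, indent=2))
```

Nothing in this body says which fields survive, how references move, or what becomes of the source. That silence is the implementation-defined part.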

The reason is not lack of effort. It is that every part of "merge" is a policy decision, and policies vary structurally — across vendors, across deployments, across jurisdictions.

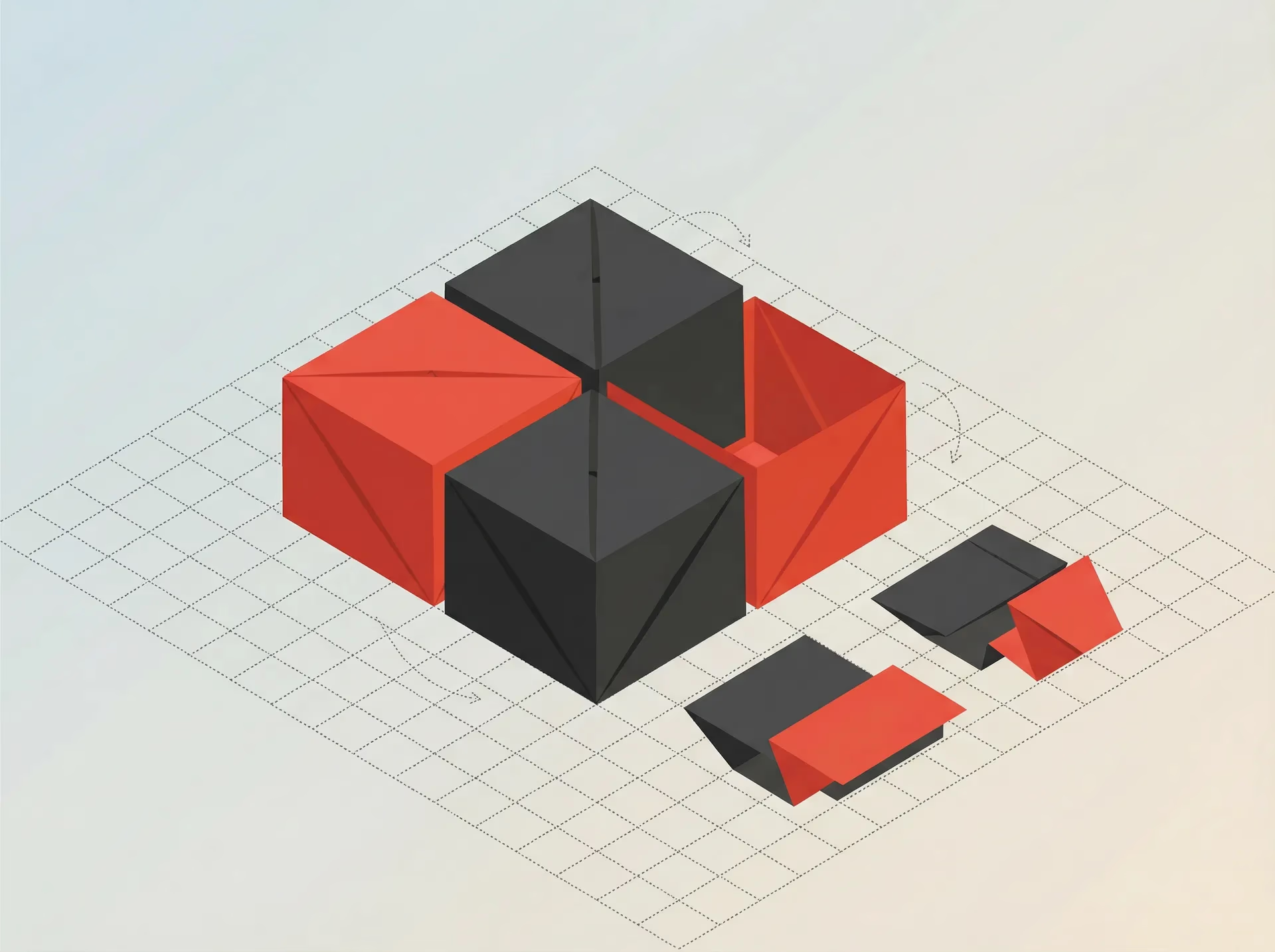

Merge and link are points on the same spectrum

Before the axes, a reframing. Merge and link are usually treated as rival operations, but they are points on the same spectrum.

At the link end, both records survive, references stay where they point, both remain active, and downstream data stays attached to each. At the merge end, survivorship decides which fields win, references are rewritten, the source is deactivated or deleted, and derived data needs reconsideration. Real implementations sit between those poles: CommonWell grades link strength explicitly; Oracle Health Millennium keeps the source inactive but addressable by ID; VistA hard-deletes; NHS PDS retires the old identifier and redirects historical lookups. The more an implementation behaves like a link, the less it commits to about surviving state. The more it behaves like a merge, the more policy it has to take on.

The four axes below define where an implementation sits on that spectrum. They are not minor variations inside "merge, the operation" — they are what separates link from merge. Once each axis has multiple legitimate answers, the combinations blow up fast.

Four axes of variability

Survivorship — which fields survive

When two patient records are combined, dozens of fields conflict: name, address, phone, identifiers, allergies, problem list, medications, code status. Which value wins is the survivorship rule.

Every serious MPI vendor exposes this as configurable, not hardcoded:

- IBM InfoSphere MDM ships four distinct merge-party survivorship rules as pluggable Java classes, triggered during suspected-duplicate detection and explicit party collapse. The rule is a configuration knob, not a hardcoded algorithm.

- Verato offers Auto-Steward and Smart Steward as distinct stewardship products: one resolves duplicates automatically against a reference database, the other provides AI-assisted recommendations for human stewards — two different answers to "can this decision be automated at all".

- Smile CDR, a FHIR-native MDM, makes survivorship a JavaScript handler the client writes. Helper utilities `mergeAll()` and `replaceAll()` are provided; the handler composes them or implements its own field-level logic. There is no default: the client is forced to make the policy decision explicit.

If a single correct survivorship strategy existed, vendors would have hardcoded it. They did not, because it does not.

The cost when the policy gets it wrong is immediate. An AHRQ PSNet case describes a patient whose contrast allergy was removed during reconciliation because a clinician judged it "not a true allergy". The EHR then displayed "no known allergies" — and the patient was subsequently scheduled for a contrast CT. Same class of failure as a naive merge: a "discard sources that look low-quality" rule quietly erased clinically load-bearing data. A different survivorship policy — never drop an allergy, always flag for review — would have caught it.
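As an illustration of the "never drop an allergy, always flag for review" policy, here is a minimal sketch. It is not how any vendor implements survivorship; `MergeDecision` and `merge_allergies` are hypothetical names, and allergies are reduced to plain strings for brevity.

```python
from dataclasses import dataclass, field


@dataclass
class MergeDecision:
    allergies: list[str]
    needs_review: list[str] = field(default_factory=list)


def merge_allergies(source: list[str], target: list[str]) -> MergeDecision:
    """Keep the union of both allergy lists and flag one-sided entries."""
    # Order-preserving union: nothing from either record is discarded.
    merged = list(dict.fromkeys(target + source))
    # Entries present on only one record go to a human for review.
    one_sided = sorted(set(source) ^ set(target))
    return MergeDecision(allergies=merged, needs_review=one_sided)


decision = merge_allergies(source=["iodinated contrast"], target=[])
print(decision)
```

A different deployment could reasonably pick another rule. The point of the sketch is only that the rule is explicit and lives in code the client controls.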

ECRI's patient-identification guidance names the directional preference directly: "duplicate records are preferable to erroneously merged records." Err toward keeping records apart rather than combining them. The rule cannot be encoded generically, because "erroneous" is defined by the clinical context the server does not see.

Reference handling — what to do with pointers to the source

Every merge has a second question: dozens or thousands of resources reference the source patient — Encounters, Observations, Claims, Appointments. What happens to those references?

Public implementations give different answers:

- Oracle Health Millennium (Cerner) returns a combined patient as inactive with a link to the current patient, and documents that patient uncombine is supported.

- VistA (VA) moves data from FROM to TO, repoints affected files, and eliminates the FROM record as an active record. If a field is populated in `FROM` and empty in `TO`, that value is merged automatically; to end up with an empty result instead, the source value has to be deleted outside the merge workflow.

- CommonWell Health Alliance, a US-wide health information exchange, models identity as links across organizations rather than a single merged survivor record. Those links are graded with Levels of Link Assurance (LOLA).

- NHS England's PDS merges confirmed duplicate NHS numbers into one surviving number, and its API guidance documents how superseded NHS numbers return a replacement number.

Four shapes, four different positions on the link↔merge spectrum. Some keep both records and resolve identity through links. Some repoint every reference to the survivor at merge time. Some keep the source as an inactive record that clients can still resolve. Others rely on a separate pointer mechanism between the old and current identity. All are defensible in their own context.
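For the "repoint every reference at merge time" shape only, a rough sketch of what the rewrite looks like on a single resource. The function name `repoint` and the sample Encounter are hypothetical; the other three shapes would skip this step entirely or replace it with a link or redirect.

```python
from typing import Any


def repoint(node: Any, old_ref: str, new_ref: str) -> int:
    """Rewrite every {"reference": old_ref} under node in place; return the count."""
    changed = 0
    if isinstance(node, dict):
        if node.get("reference") == old_ref:
            node["reference"] = new_ref
            changed += 1
        for value in node.values():
            changed += repoint(value, old_ref, new_ref)
    elif isinstance(node, list):
        for item in node:
            changed += repoint(item, old_ref, new_ref)
    return changed


encounter = {
    "resourceType": "Encounter",
    "id": "enc-1",
    "subject": {"reference": "Patient/pat-a"},
    "participant": [{"actor": {"reference": "Practitioner/dr-1"}}],
}
count = repoint(encounter, "Patient/pat-a", "Patient/pat-b")
print(count, encounter["subject"])
```

Even this simple walk embeds policy: it touches every matching reference regardless of resource type, which may or may not be what a given deployment wants.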

Source disposition — what becomes of the source record

Reference handling is about inbound pointers. Source disposition is different: what happens to the source record itself after the merge? The FHIR admin-incubator spec enumerates two permitted outcomes in one sentence — "a GET on the source Patient resource ID will return either 200 OK (inactive, replaced-by populated) or 404 not found (when the merge system deleted the resource)". Real deployments add more variants on top of that:

- Inactive with `replaced-by` link. Oracle Health Millennium uses exactly this model: the source is returned as inactive and linked to the current patient, addressable only by direct ID lookup, never via search.

- Hard-deleted. VistA moves data from the FROM record to the TO record, repoints affected files, and eliminates the FROM record as an active record. A later GET sees nothing.

- Superseded identifier, new canonical number. NHS England's PDS retires the old NHS number as `superseded` and issues a replacement; historical lookups are redirected to the survivor rather than the source record itself being retained.

- Obsolete-but-tracked in a separate state machine. IBM Initiate keeps the obsolete member with its own row and linkage type `Merged`, carrying the survivor EID. The source is neither active nor gone; it sits in a distinct lifecycle state so the MDM can still reason about it.
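From the client's side, the two outcomes the admin-incubator spec permits can be told apart with a small check like the one below. This is a sketch under assumptions: `source_disposition` is a hypothetical helper, it takes an already-fetched status code and JSON body, and anything outside the two quoted outcomes is reported as unexpected rather than interpreted.

```python
from typing import Optional


def source_disposition(status_code: int, body: Optional[dict]) -> str:
    """Classify the result of a GET on the source Patient after a merge."""
    if status_code == 404:
        return "deleted"
    if status_code == 200 and body is not None:
        # Collect targets of any Patient.link entries typed "replaced-by".
        replaced_by = [
            link.get("other", {}).get("reference")
            for link in body.get("link", [])
            if link.get("type") == "replaced-by"
        ]
        replaced_by = [ref for ref in replaced_by if ref]
        if body.get("active") is False and replaced_by:
            return f"replaced-by {replaced_by[0]}"
    return "unexpected"


inactive_source = {
    "resourceType": "Patient",
    "id": "pat-a",
    "active": False,
    "link": [{"other": {"reference": "Patient/pat-b"}, "type": "replaced-by"}],
}
print(source_disposition(200, inactive_source))
print(source_disposition(404, None))
```

The superseded-identifier and obsolete-but-tracked variants do not fit either branch, which is exactly why a client cannot assume one disposition across servers.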

Layered on top of this are jurisdiction-specific retention and erasure rules — GDPR Article 17 in the EU, 42 CFR Part 2 segmentation in the US, state-level minor-consent statutes — each of which constrains what the source record can or must become. The US federal stack shows the same pattern by omission: ONC and CMS require auditability and data quality, while efforts like the MATCH IT Act reintroduced in March 2025 and TEFCA push identity quality upstream. They try to reduce the need for merge; they still do not prescribe the merge procedure itself.

Downstream effects — derived artifacts and follow-on work

Merging the patient record is only the first-order problem. The second-order problem is everything derived from the record that does not mechanically follow a reference rewrite: linkage graphs inside the MDM, cumulative calculations such as radiation dose or opioid totals, and third-party caches. Two public examples make the point:

- IBM Initiate documents that when member C is merged into a new entity, members A and B previously linked to C do not automatically follow. They each get a new EID and go back through the comparison process. The merge does not transitively close over the link graph — the MDM re-evaluates, and some previously-linked records may end up attached somewhere else or not at all.

- Epic's public EHI documentation reserves an explicit review workflow on lifetime-dose history post-merge. The `PAT_LIFEDOSE_HX` table has dedicated columns for who reviewed the entry after merge/unmerge, when, and a review-reason enum that includes `Patient Merge`, `Patient Unmerge`, `Patient Contact Move`, with statuses `Need Review`, `Accepted`, `Rejected`. For cumulative clinical calculations, automated distribution is treated as unsafe: a human has to adjudicate what belongs.

The common thread: some post-merge artifacts cannot be rewritten in the same transaction. They need re-evaluation (MDM link graph), routing to a review queue (cumulative dose), or reconciliation against external systems that cached the old identity. A server that treats merge as "flip references and move on" silently drops this class of work on the floor.

Where the line sits

Notice the pattern. Server-side merge wants to be one algorithm. Reality is at least four independent policy axes, each with multiple shipped variants. That is a combinatorial explosion before regulatory overlays even enter the picture.

The way out is not a smarter server. It is moving the policy to where the policy lives — the client. In our $merge, the client sends a transaction Bundle describing every PUT, POST, and DELETE. The server owns the invariants: atomicity, audit, anti-circular-merge checks, optimistic locking via ifMatch. The client owns the policy: which fields survive, how references are rewritten, what happens to the source, and what downstream work needs routing to a review queue.
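A rough sketch of what such a client-assembled Bundle could look like, using only standard FHIR transaction fields (`entry.request.method`, `url`, `ifMatch`). How the Bundle is wrapped or submitted to our $merge is not shown here; the function `merge_bundle`, its arguments, and the choice to hard-delete the source are all illustrative assumptions, one policy among the many described above.

```python
from typing import Optional


def merge_bundle(target: dict, target_version: str, source_id: str,
                 source_version: str, repointed: list[dict]) -> dict:
    """Assemble a transaction Bundle expressing one client-chosen merge policy."""

    def put(resource: dict, version: Optional[str] = None) -> dict:
        request = {"method": "PUT",
                   "url": f"{resource['resourceType']}/{resource['id']}"}
        if version is not None:
            # Optimistic lock: the write fails if the version has moved on.
            request["ifMatch"] = f'W/"{version}"'
        return {"resource": resource, "request": request}

    entries = [put(target, target_version)]          # survivor with merged fields
    entries += [put(resource) for resource in repointed]  # rewritten references
    entries.append({                                 # this policy deletes the source
        "request": {"method": "DELETE", "url": f"Patient/{source_id}",
                    "ifMatch": f'W/"{source_version}"'},
    })
    return {"resourceType": "Bundle", "type": "transaction", "entry": entries}


bundle = merge_bundle(
    target={"resourceType": "Patient", "id": "pat-b", "active": True},
    target_version="3",
    source_id="pat-a",
    source_version="7",
    repointed=[{"resourceType": "Encounter", "id": "enc-1",
                "subject": {"reference": "Patient/pat-b"}}],
)
print([entry["request"]["method"] for entry in bundle["entry"]])
```

A client that prefers the inactive-with-link disposition would swap the final DELETE for a PUT of the deactivated source. The server-side invariants stay the same either way.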

The audit trail is not a nice-to-have. ECRI's patient-identification toolkit and CMS's documentation rules effectively require the same record: who performed the merge, when, why, a pre-merge snapshot of both records, and the list of affected resources. Mapped to FHIR, that is a Task (operation, actor, reason, linked source/target), a Provenance entry on every touched resource, and the FHIR History API for the pre-merge snapshot — all written in the same transaction as the merge itself. Split any of this across transactions and the audit stops being a single truth.

Unmerge raises the same question to a higher power. When the merge happened on Monday and three new Encounters were posted to the target on Tuesday, where do those go on Wednesday — back to the restored source, distributed, or kept on the target? When the source was hard-deleted, can it be recreated at all? There is no general answer. So our $unmerge mirrors $merge: server owns invariants, client owns policy — the only shape we know how to make safe at this scale. We will walk through the design directly in the next post.

Want to try client-driven merge in your project? MDMbox is available today.