Why Performance Matters

Performance directly impacts both user experience and operational costs. Clinicians and patients need fast access to data throughout the healthcare journey. Every millisecond of delay compounds across thousands of daily interactions, affecting clinical efficiency and patient outcomes. Beyond UX, performance drives infrastructure costs: database and backup size, compute resources, and maintenance overhead all grow with data volume, so an inefficient server becomes more expensive to run as the dataset grows.

When choosing a FHIR server, performance is one of the most important factors. Each system built on top of FHIR — EHR/PHR, CDR solutions, analytics platforms — has different workload patterns and requires different performance characteristics. But for a generic FHIR server, three core workloads are universal:

- CRUD — create, read, update, delete individual resources

- Batch processing — bulk import, data exchange, and integration scenarios

- Search — querying resources by various parameters

FHIR batch processing APIs are commonly used in data exchange and integration — for example, migrating data from legacy systems into a FHIR server. CRUD and search operations power OLTP workloads: building EHR/PHR systems, patient-facing applications, and clinical decision support tools.
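
To make the batch workload concrete, here is a minimal sketch of how individual resources can be wrapped in a FHIR `Bundle` of type `batch`, with one `POST` entry per resource. The helper name `build_batch_bundle` and the sample patients are our own illustration and not part of any server's API; the Bundle shape follows the FHIR specification.

```python
import json


def build_batch_bundle(resources):
    """Wrap FHIR resources in a Bundle of type 'batch', one POST entry each."""
    return {
        "resourceType": "Bundle",
        "type": "batch",
        "entry": [
            {
                "resource": resource,
                # Each entry is created under its own resource type, e.g. "Patient".
                "request": {"method": "POST", "url": resource["resourceType"]},
            }
            for resource in resources
        ],
    }


# Hypothetical sample data, for illustration only.
patients = [
    {"resourceType": "Patient", "name": [{"family": "Example-One"}]},
    {"resourceType": "Patient", "name": [{"family": "Example-Two"}]},
]

bundle = build_batch_bundle(patients)
print(json.dumps(bundle, indent=2))
```

A client would send this document as the body of a `POST` to the server's base URL. CRUD and search, by contrast, work on individual resource URLs such as `Patient/<id>` or `Patient?family=<name>`.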

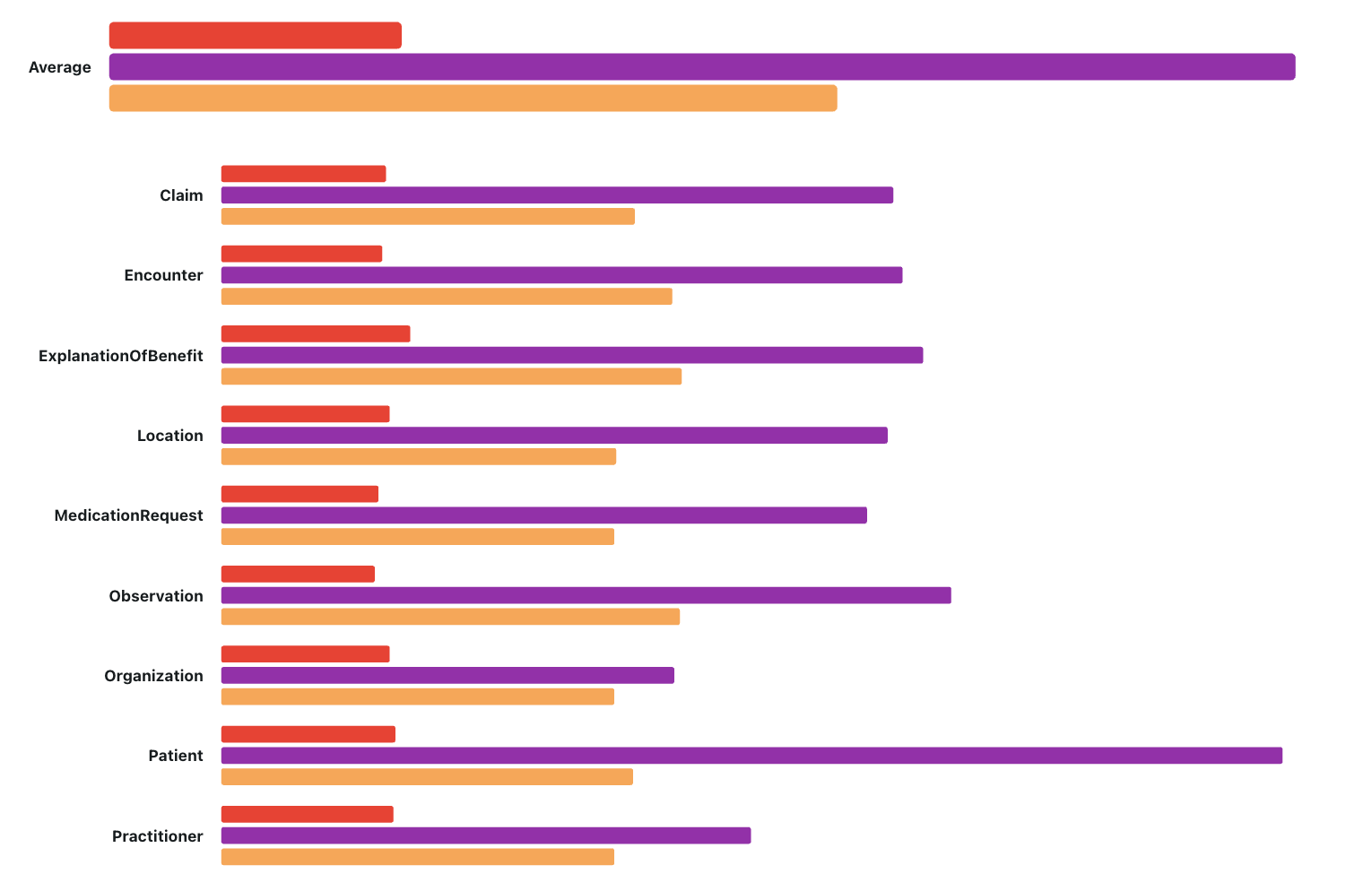

What We're Benchmarking

We will benchmark several popular open-source FHIR servers and compare them against Aidbox.

For each server, we'll measure:

- Throughput — operations per second under sustained load

- Latency — p99 response times

- Resource consumption — CPU, memory, and I/O utilization

- Disk usage — how much storage each server requires for the same dataset
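
As a rough sketch of how the first two metrics can be derived from raw measurements, the snippet below computes throughput and a p99 latency from a list of per-request timings. It uses the nearest-rank percentile definition, which is one of several common conventions and an assumption on our part; the function names are illustrative.

```python
def throughput(operation_count, elapsed_seconds):
    """Operations per second over a measurement window."""
    return operation_count / elapsed_seconds


def p99(latencies_ms):
    """99th percentile by the nearest-rank method.

    Returns the smallest sample such that at least 99% of samples
    are less than or equal to it.
    """
    if not latencies_ms:
        raise ValueError("need at least one latency sample")
    ordered = sorted(latencies_ms)
    # Integer form of ceil(0.99 * n), avoiding floating-point rounding.
    rank = (99 * len(ordered) + 99) // 100
    return ordered[rank - 1]


# Synthetic samples for illustration: 1 ms, 2 ms, ..., 100 ms.
samples = [float(ms) for ms in range(1, 101)]
print(p99(samples))           # 99.0
print(throughput(500, 2.0))   # 250.0
```

Reporting p99 rather than the mean keeps occasional slow requests visible, and those slow requests are what users notice.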

We designed the test suite to capture how performance behaves both on a clean database and after a significant volume of data has been loaded. This is critical: many servers perform well on small datasets but degrade as data grows.

Stage 1: Empty Database

Starting from a fresh installation:

- Measure baseline performance of CRUD operations

- Batch import 1,000 synthetic patient records (generated with Synthea)

- Evaluate the performance of different search operations

This establishes the baseline — the best-case scenario for each server.

Stage 2: Load 100K Patients

Import 100,000 synthetic patient records and measure:

- Total import duration

- Database size on disk

- Resource consumption during import

This simulates a realistic mid-size deployment and reveals how each server handles sustained write pressure.
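
One way to measure total import duration is to split the dataset into fixed-size chunks and time the whole loop. The sketch below assumes a caller-supplied `send_chunk` function (hypothetical, for example one that posts a Bundle to the server under test) and a chunk size of 100, which is an arbitrary choice for illustration.

```python
import time


def chunked(records, size):
    """Yield consecutive slices of `records` with at most `size` items each."""
    for start in range(0, len(records), size):
        yield records[start:start + size]


def timed_import(records, send_chunk, chunk_size=100):
    """Send all records chunk by chunk and report counts and wall-clock time.

    `send_chunk` is a placeholder for whatever actually talks to the server.
    """
    started = time.perf_counter()
    sent_records = 0
    sent_chunks = 0
    for chunk in chunked(records, chunk_size):
        send_chunk(chunk)
        sent_records += len(chunk)
        sent_chunks += 1
    elapsed = time.perf_counter() - started
    return {"records": sent_records, "chunks": sent_chunks, "elapsed_s": elapsed}


# Stand-in sender that only collects chunks, so the sketch runs without a server.
received = []
result = timed_import(list(range(250)), received.append, chunk_size=100)
print(result["records"], result["chunks"])
```

Using `time.perf_counter()` gives a monotonic clock suited to measuring elapsed time. Database size and resource consumption have to be collected outside the client, on the server host.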

Stage 3: Incremental Load Testing

With 100K patients already in the database:

- Re-run CRUD operations — compare against the empty database baseline

- Import an additional 1,000 patient records on top of the existing 100K

- Re-run search operations — measure how query performance changes with data volume

The delta between Stage 1 and Stage 3 tells the real story: how well does performance hold up as data grows?
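
The comparison itself is simple arithmetic: for each metric, express the Stage 3 value as a percentage change from the Stage 1 baseline. The sketch below uses made-up placeholder numbers purely to show the calculation; they are not benchmark results, and the metric names are hypothetical.

```python
def relative_change(baseline, loaded):
    """Percentage change from the baseline value to the loaded value."""
    return (loaded - baseline) / baseline * 100.0


def compare_stages(stage1, stage3):
    """Relative change for every metric present in both stages."""
    return {
        metric: relative_change(stage1[metric], stage3[metric])
        for metric in stage1
        if metric in stage3
    }


# Placeholder values for illustration only, NOT measured results.
stage1 = {"read_p99_ms": 10.0, "search_rps": 200.0}
stage3 = {"read_p99_ms": 15.0, "search_rps": 150.0}

for metric, change in compare_stages(stage1, stage3).items():
    print(f"{metric}: {change:+.1f}%")
```

Note that the sign means different things per metric: a positive change in latency is a regression, while a positive change in throughput is an improvement.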

Stay Tuned

We'll publish all benchmarks, test scripts, and raw results in upcoming posts. Follow us on LinkedIn so you don't miss the updates.

Have questions about FHIR server performance? Contact us — we've been optimizing Aidbox for large-scale deployments for over a decade.