Data ingestion is one of the first major hurdles for any FHIR adopter — whether you're just getting started, spinning up a new environment, or migrating from an existing server. When ingestion is poorly designed, teams run into slow migrations, broken references, and systems that degrade under load.

This is part of a series of articles we're publishing on FHIR data ingestion into Aidbox. Each article covers a specific aspect of the problem. In this one, we focus on Aidbox HTTP Queue: how it works and what to be aware of.

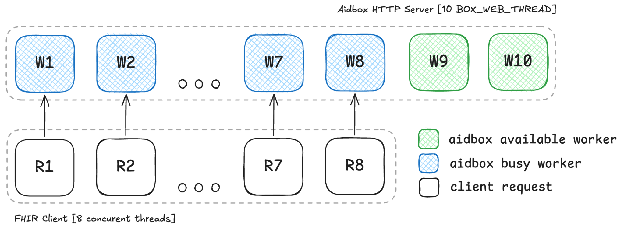

Aidbox can be modeled as an asynchronous, non-blocking web server built around a request queue and a fixed number of worker threads (BOX_WEB_THREAD). Every incoming request is first placed into the queue and then picked up by a free worker for processing.

When requests arrive faster than the available workers can process them, they begin to accumulate in the queue. As a result, responses are no longer immediate and latency increases. Under sustained load, some requests may not be processed in time and will eventually fail due to client-side timeouts.
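
The queueing model above can be illustrated with a minimal Python sketch: a single FIFO queue drained by a fixed pool of identical workers. This is a toy simulation, not Aidbox internals; `simulate_fifo`, the one-second processing time, and the arrival rates are assumptions chosen to keep the numbers round. When arrivals match capacity (8 requests per second for 8 workers), nobody waits; at twice that rate, the wait grows with every new batch of requests.

```python
import heapq


def simulate_fifo(arrivals, service_time, workers):
    """Toy model: one FIFO queue drained by `workers` identical workers.

    Returns how long each request waited in the queue before a worker
    picked it up (in arrival order). Not Aidbox internals.
    """
    # Min-heap of the times at which each worker becomes free.
    free_at = [0.0] * workers
    heapq.heapify(free_at)
    waits = []
    for arrived in sorted(arrivals):
        # The earliest-free worker takes the request, never before it arrives.
        start = max(arrived, heapq.heappop(free_at))
        waits.append(start - arrived)
        heapq.heappush(free_at, start + service_time)
    return waits


# 8 workers, 1 s per request (assumed): capacity is 8 requests per second.
at_capacity = simulate_fifo([i * 0.125 for i in range(64)], 1.0, 8)   # 8 req/s
overloaded = simulate_fifo([i * 0.0625 for i in range(64)], 1.0, 8)   # 16 req/s
print(max(at_capacity), overloaded[-1])  # 0.0 3.5
```
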

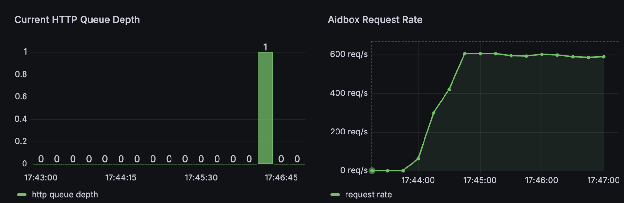

The presence of items in the HTTP queue indicates that the system is under pressure and receiving more concurrent requests than it can immediately handle. A near-zero queue typically corresponds to low latency, while a small queue can be acceptable and even beneficial for efficient resource utilization. However, sustained growth in queue length or queue time is a strong signal of overload — it means that workers are continuously busy and cannot keep up with the incoming traffic.
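
One way to turn "sustained growth" into a check is to look at a window of periodic queue-length samples and flag overload only when the queue keeps growing, not when it merely holds a small steady value. The sketch below assumes you already collect such samples from your own monitoring; `sustained_growth` and its thresholds are hypothetical, and real metrics are noisy enough that you would probably smooth them first.

```python
def sustained_growth(queue_samples, window, min_increase=1):
    """Hypothetical overload heuristic over periodic queue-length samples.

    Returns True when the queue never shrank during the last `window`
    samples and grew by at least `min_increase` overall. A small queue
    that stays flat is treated as healthy.
    """
    if window < 2 or len(queue_samples) < window:
        return False
    recent = queue_samples[-window:]
    never_shrank = all(later >= earlier for earlier, later in zip(recent, recent[1:]))
    return never_shrank and recent[-1] - recent[0] >= min_increase


print(sustained_growth([0, 1, 0, 2, 1, 0], window=4))  # False: bursts drain
print(sustained_growth([3, 3, 3, 3, 3], window=4))     # False: small and stable
print(sustained_growth([2, 5, 9, 14, 22], window=4))   # True: keeps growing
```
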

To illustrate these dynamics with concrete metrics, we have developed three distinct technical scenarios. By analyzing these examples, we can identify effective strategies for avoiding common pitfalls and establishing a high-performance client-server communication model. You will find all the practical recommendations at the end of the article.

All the scenarios are based on the same workload profile to ensure comparability:

- Fixed dataset of 1200 bundles (e.g., 100 per batch)

- Bundle size and structure remain constant across tests

- The client continuously sends requests up to the configured concurrency limit

This allows us to isolate the effect of concurrency and timeout behavior on the HTTP queue without introducing variability from the workload itself.
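
A client that keeps sending requests up to a fixed concurrency limit can be sketched in Python with `asyncio.Semaphore`, which lets at most N coroutines into the guarded section at a time. `fake_send_bundle` is a hypothetical stand-in that only sleeps; in a real client it would be the HTTP call that submits one bundle to Aidbox. The function also records the peak number of in-flight requests so the limit can be verified.

```python
import asyncio


async def fake_send_bundle(bundle_id):
    """Hypothetical stand-in for the HTTP request that submits one bundle."""
    await asyncio.sleep(0.01)  # pretend processing time on the server
    return bundle_id


async def ingest(bundle_ids, concurrency, send=fake_send_bundle):
    """Send every bundle, keeping at most `concurrency` requests in flight.

    Returns the results (in input order) and the peak number of
    requests that were in flight at the same time.
    """
    sem = asyncio.Semaphore(concurrency)
    in_flight = 0
    peak = 0

    async def bounded(bundle_id):
        nonlocal in_flight, peak
        async with sem:  # waits here once `concurrency` requests are active
            in_flight += 1
            peak = max(peak, in_flight)
            try:
                return await send(bundle_id)
            finally:
                in_flight -= 1

    results = await asyncio.gather(*(bounded(b) for b in bundle_ids))
    return results, peak


# 1200 bundles, never more than 8 requests in flight.
results, peak = asyncio.run(ingest(range(1200), concurrency=8))
print(len(results), peak)  # 1200 8
```
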

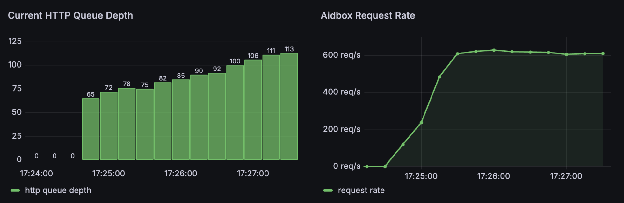

Scenario 1: Unbounded Concurrency + Short Client Timeout

In this setup, Aidbox is configured with 8 worker threads (BOX_WEB_THREAD) running on a single CPU, while the client generates up to 64 concurrent requests with a 10-second timeout. At first glance, this may look like a reasonable load pattern, but in practice it creates a classic overload driven by mismatched concurrency and timeout behavior.

Because the incoming rate is higher than the processing rate, the queue begins to grow. This directly increases latency, which is roughly queue time plus processing time: each request now has to wait longer in the queue before a worker picks it up. Eventually, the waiting time exceeds the client's 10-second timeout.
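
For a rough sense of when the timeout kicks in, consider only the initial burst: 64 requests arrive together and 8 workers serve them in FIFO order, so a request's queue time is the number of full worker rounds ahead of it. `burst_latencies` and the 1.5-second processing time are assumptions for illustration, and the model ignores retries as well as any parallelism inside a worker.

```python
def burst_latencies(concurrency, workers, processing_time):
    """Latency of each request when `concurrency` requests arrive together
    and `workers` identical workers serve them in FIFO order.

    latency = queue time + processing time, where the queue time is the
    number of full worker "rounds" ahead of the request.
    """
    latencies = []
    for position in range(concurrency):
        queue_time = (position // workers) * processing_time
        latencies.append(queue_time + processing_time)
    return latencies


# Scenario 1 shape: 64 concurrent requests, 8 workers, 10 s client timeout.
# The 1.5 s processing time is an assumed value.
lat = burst_latencies(64, 8, 1.5)
timed_out = sum(1 for value in lat if value > 10.0)
print(max(lat), timed_out)  # 12.0 16
```

Under these assumptions, the last 16 of the 64 requests exceed the timeout before a single retry is sent, and any processing time above 1.25 seconds (10 s divided by 8 rounds) is enough for the last batch to time out.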

At this point, the following behavior occurs: the client closes the connection and considers the request failed, but the server does not cancel the work. The request remains in the queue (or is already being processed) and continues consuming resources until completion. From the server's perspective, it is still doing useful work — but from the client's perspective, that work is already irrelevant.

As timeouts accumulate, the client starts issuing retries, which generate even more requests. These new requests are added on top of the already growing queue, which still contains stale work from previously timed-out requests. This creates a positive feedback loop: more load leads to more timeouts, which triggers retries and further increases the load.
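
The feedback loop can be reproduced with a small tick-based simulation. It assumes the client retries immediately after each timeout, so it always has `concurrency` requests it is still waiting for, and that the server never cancels abandoned work. Time is measured in abstract ticks; `simulate` and its parameters are a hypothetical toy model, not Aidbox behavior. Running it with and without a timeout shows the difference the timeout makes.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class Request:
    sent_at: int
    abandoned: bool = False  # client timed out; the server does not know
    done: bool = False


def simulate(workers, concurrency, service_ticks, timeout_ticks, total_ticks):
    """Tick-based toy model of a client with timeouts and immediate retries.

    A request older than `timeout_ticks` is abandoned and replaced by a
    retry, but the server keeps it and still processes it.
    `timeout_ticks=None` disables timeouts.
    Returns (queue length per tick, useful responses, wasted responses).
    """
    queue = deque()
    running = []  # [remaining_ticks, Request] per busy worker
    live = []     # requests the client is still waiting for
    queue_lengths, useful, wasted = [], 0, 0
    for now in range(total_ticks):
        # 1. Busy workers make progress; finished requests leave the server.
        next_running = []
        for remaining, req in running:
            if remaining > 1:
                next_running.append([remaining - 1, req])
            else:
                req.done = True
                if req.abandoned:
                    wasted += 1  # nobody is waiting for this response
                else:
                    useful += 1
        running = next_running
        # 2. The client gives up on old requests without telling the server.
        if timeout_ticks is not None:
            for req in live:
                if not req.done and now - req.sent_at >= timeout_ticks:
                    req.abandoned = True
        live = [r for r in live if not r.done and not r.abandoned]
        # 3. The client refills up to its limit: new work plus retries.
        while len(live) < concurrency:
            req = Request(sent_at=now)
            live.append(req)
            queue.append(req)
        # 4. Idle workers pick up the oldest queued requests.
        while len(running) < workers and queue:
            running.append([service_ticks, queue.popleft()])
        queue_lengths.append(len(queue))
    return queue_lengths, useful, wasted


# 8 workers, 64 concurrent requests, 2 ticks of processing per request.
q_to, useful_to, wasted_to = simulate(8, 64, 2, timeout_ticks=10, total_ticks=200)
q_nt, useful_nt, wasted_nt = simulate(8, 64, 2, timeout_ticks=None, total_ticks=200)
print(useful_to, useful_nt)                          # 40 792
print(max(q_nt), q_to[-1] > q_to[100] > max(q_nt))   # 56 True
```

With the timeout, only the first 40 requests ever produce a useful response; everything after that is processed for a client that has already given up, while the queue keeps growing. Without the timeout, the queue stays flat at 56.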

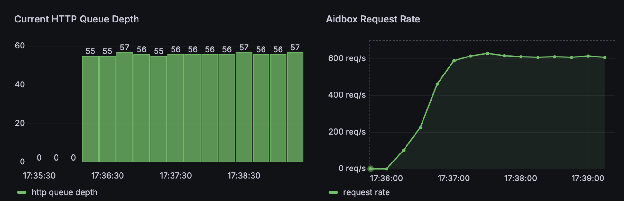

Scenario 2: High Concurrency + No Client Timeouts

In this configuration, the server setup remains the same — 8 worker threads on a single CPU — while the client continues to send up to 64 concurrent requests. The key difference is that the client does not enforce any timeout, meaning it is willing to wait indefinitely for a response.

Just like in Scenario 1, the incoming request rate exceeds the server's processing capacity. Requests are placed into the HTTP queue, and workers process them at a fixed rate. However, because the client does not time out, no requests are abandoned and no retries are generated.

As a result, the system reaches a steady state. The queue grows initially but then stabilizes at roughly the difference between client concurrency and available workers (in this case, 64 − 8 = 56 queued requests). Instead of a runaway queue, we now have a constant backlog.
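
The steady state of this scenario follows from Little's law (requests in flight = throughput × latency), assuming every request takes the same time and each worker handles one request at a time. The sketch below is a back-of-the-envelope model; `closed_loop_steady_state` and the 0.5-second processing time are assumptions for illustration.

```python
def closed_loop_steady_state(concurrency, workers, processing_time):
    """Steady state of a client that always keeps `concurrency` requests in
    flight (no timeouts) against `workers` identical workers.

    Returns (queued requests, throughput in req/s, end-to-end latency in s).
    """
    busy = min(concurrency, workers)
    queued = concurrency - busy              # backlog waiting for a worker
    throughput = busy / processing_time      # capped by the worker count
    latency = concurrency / throughput       # Little's law: N = X * R
    return queued, throughput, latency


# Assumed 0.5 s processing time per request; 8 workers as in the scenarios.
print(closed_loop_steady_state(64, 8, 0.5))  # (56, 16.0, 4.0)
print(closed_loop_steady_state(8, 8, 0.5))   # (0, 16.0, 0.5)
```

Under these assumptions, going from 8 to 64 concurrent requests adds no throughput; it only multiplies latency by 8, and 3.5 of the 4 seconds are spent waiting in the queue. The second call corresponds to the matched setup of Scenario 3.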

From the outside, this looks much healthier: there are no timeouts, no errors, and no retry storms. However, this stability comes at the cost of latency. Each request must wait in the queue before being processed, which significantly increases end-to-end response time.

Importantly, throughput (RPS) remains unchanged compared to Scenario 1. The system is still bounded by the number of worker threads and CPU capacity. The only thing that changed is how the excess load is handled: instead of being dropped (via timeouts), it is absorbed into latency.

This means the system is operating at constant saturation — all workers are continuously busy, and the queue serves as a buffer that smooths out the overload at the expense of responsiveness.

In this steady state, every incoming request has to pass through the existing backlog (roughly 56 queued requests) before it can be processed. The queue becomes a latency barrier for all traffic.

Because the queue is shared, all types of requests, including critical ones such as health checks or user-facing operations, are subject to the same delay. While requests are not dropped, their success depends on whether they can tolerate this accumulated wait time.

This creates a subtle but important risk: the system appears stable, but any increase in latency sensitivity (e.g., introducing timeouts or adding more traffic) can quickly push it into failure mode.

Scenario 3: Matched Concurrency to Worker Capacity

In this configuration, the client concurrency is aligned with the server's processing capacity: 8 concurrent requests against 8 worker threads on a single CPU. Unlike in the previous scenarios, the system is no longer overloaded, because the incoming request rate closely matches what the server can handle.

Because of this alignment, requests are picked up by workers almost immediately after arriving. The HTTP queue remains close to empty, and queuing delay is effectively eliminated. Each request flows through the system with minimal waiting time, resulting in low and predictable latency.

From a throughput perspective, the system performs at its natural limit: RPS matches the worker capacity, and there is no artificial inflation or degradation caused by retries or backlog. The system appears stable, efficient, and well-balanced.

However, this setup comes with an important limitation: it operates right at the edge of its capacity. Since all workers are continuously occupied with client traffic, there is no spare capacity to absorb additional load. Any other requests, such as health checks, background jobs, or user-driven traffic, immediately compete for the same workers, and even a small increase in traffic pushes the system into queueing and higher latency.

In other words, the system is perfectly balanced but has zero buffer. It is close to ideal in terms of efficiency under controlled conditions, yet fragile in real-world environments, where traffic is rarely perfectly predictable.

Recommended Load Pattern

This model is intentionally simplified and not an exact representation of how the system behaves in practice, but it is useful for building intuition. In reality, a single worker can sometimes handle more than one request concurrently, depending on CPU availability and the nature (size and complexity) of the workload.

As a rule of thumb, it helps to think in terms of alignment: the number of concurrent client requests targeting Aidbox should generally stay below the number of configured web threads. The more auxiliary or side traffic (health checks, background jobs, integrations) the system needs to handle, the more headroom you should leave — meaning fewer client-side concurrent requests relative to available workers.

In other words, not all workers should be "reserved" for a single traffic source; some capacity should remain available for everything else the system needs to process.
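
The rule of thumb can be expressed as a small helper that reserves a share of the web threads for side traffic and keeps client concurrency strictly below BOX_WEB_THREAD. `client_concurrency` and the reserved fractions are hypothetical starting points for experimentation, not official Aidbox guidance; the right values depend on what your monitoring shows.

```python
import math


def client_concurrency(web_threads, reserved_fraction):
    """Hypothetical rule of thumb, not an official Aidbox formula.

    Reserves a share of the web threads (BOX_WEB_THREAD) for health checks,
    background jobs and integrations, so client concurrency stays strictly
    below the thread count, while always allowing at least one request.
    """
    if web_threads < 2:
        raise ValueError("need at least 2 web threads to keep any headroom")
    if not 0 <= reserved_fraction < 1:
        raise ValueError("reserved_fraction must be in [0, 1)")
    reserved = max(1, math.ceil(web_threads * reserved_fraction))
    return max(1, web_threads - reserved)


print(client_concurrency(8, 0.0))   # 7: at least one worker stays free
print(client_concurrency(8, 0.25))  # 6
print(client_concurrency(8, 0.5))   # 4: lots of side traffic
```
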

Effective configuration relies on robust observability. By monitoring key metrics such as request volume, queue depth, latency, RPS, and timeouts, you can identify the optimal balance for your specific workload. This data-driven approach allows you to precisely tune the number of Aidbox workers and allocated CPU resources for your environment.
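
As a starting point on the client side, a window of per-request records can be reduced to completed requests per second, a 95th-percentile latency, and a timeout rate. The `Observation` record, `summarize`, and the sample numbers are hypothetical; server-side signals such as queue depth would come from your Aidbox monitoring setup.

```python
from dataclasses import dataclass


@dataclass
class Observation:
    """One client-side request record (hypothetical schema)."""
    latency_s: float
    timed_out: bool


def summarize(observations, window_s):
    """Summarize one monitoring window of client-side observations."""
    n = len(observations)
    if n == 0:
        return {"completed_rps": 0.0, "p95_latency_s": None, "timeout_rate": 0.0}
    latencies = sorted(o.latency_s for o in observations)
    rank = (95 * n + 99) // 100  # nearest-rank 95th percentile, 1-based
    timeouts = sum(1 for o in observations if o.timed_out)
    return {
        "completed_rps": (n - timeouts) / window_s,
        "p95_latency_s": latencies[rank - 1],
        "timeout_rate": timeouts / n,
    }


# Made-up window: 18 fast responses and 2 timeouts over 2 seconds.
sample = [Observation(0.2, False)] * 18 + [Observation(10.0, True)] * 2
print(summarize(sample, window_s=2.0))
# {'completed_rps': 9.0, 'p95_latency_s': 10.0, 'timeout_rate': 0.1}
```

Tracking these values while changing client concurrency or BOX_WEB_THREAD makes it easier to see whether a change improved throughput or only moved the wait into latency.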

We will explore further aspects of Aidbox scalability and workload management in upcoming articles.

How to configure this in Aidbox

If you're working with high-throughput FHIR ingestion, you can tune the number of web worker threads in Aidbox via BOX_WEB_THREAD. However, performance tuning involves much more than increasing the number of workers; we recommend checking out the article explaining how web workers and database connections together contribute to Aidbox performance.

Spin up your own Aidbox instance in seconds to continue experimenting or reach out to discuss this setup.